Short Tutorial - AI Agent with Python, Gemini, and LangChain

How AI agents can be built in just a few simple steps.

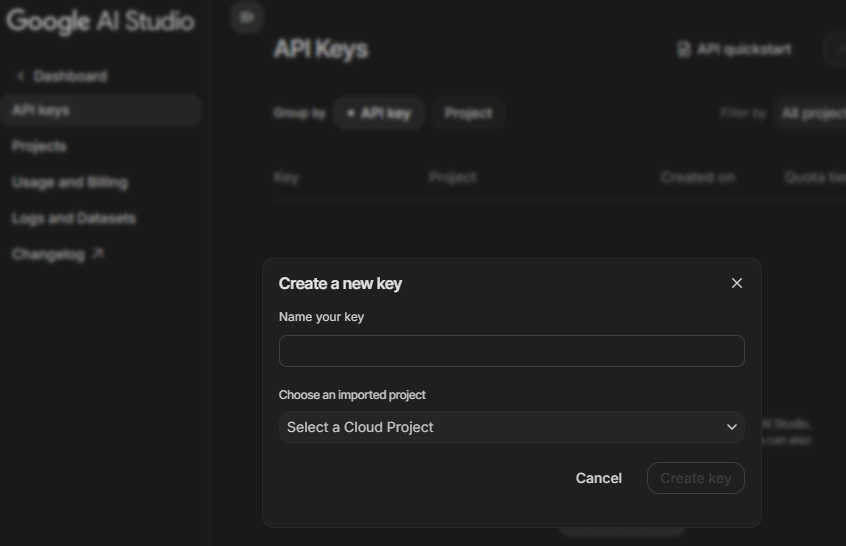

Getting an API Key

At the time of writing, anyone can go to www.chat.com to chat with OpenAI’s models. To interact with those models programmatically, however, we need an API key. Creating an OpenAI platform account and generating an API key is easy. Billing used to be pay-as-you-go, but at the time of writing, the API requires payment up front.

We will instead use Google AI Studio, which has a free tier and does not require upfront payment. If you prefer to use OpenAI, they have an excellent quickstart guide.

Google AI Studio has a well-written Python quickstart guide as well. It is extremely easy to make your first API call without spending a single cent or even providing your credit card information!

```python
# pip install google-genai

from google import genai

# Replace with your own key from Google AI Studio.
# (If api_key is omitted, the client looks for the GEMINI_API_KEY environment variable.)
client = genai.Client(api_key='YOUR_API_KEY')

response = client.models.generate_content(
    model='gemini-2.5-flash',
    contents='Explain how AI works in a few words',
)
print(response.text)
```

At the time of writing, the free tier is very generous. It caps requests and tokens per minute and per day, but it has no expiry date and no hard overall limit. All of this without a credit card on file!
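Because the free tier caps requests per minute, a script that calls the API in a loop can hit rate-limit errors. Below is a minimal, generic retry-with-exponential-backoff sketch in plain Python. `RateLimitError` is a hypothetical placeholder, so check which exception the google-genai SDK actually raises for an HTTP 429 response and catch that instead.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for the exception your SDK raises on HTTP 429."""


def call_with_backoff(fn, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(); on RateLimitError, wait base_delay * 2**attempt (plus jitter) and retry."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                raise  # give up after the last allowed retry
            # Exponential backoff with random jitter so retries don't line up
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

Usage would look like `call_with_backoff(lambda: client.models.generate_content(model='gemini-2.5-flash', contents='...'))`. The `sleep` parameter is injectable only so the function can be tested without actually waiting.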

What is an AI Agent Anyway?

You are probably wondering what the difference is between the usual chatbot web interface, say www.chat.com, and this thing called an “AI agent”. The grand vision is to have autonomous intelligent software independently doing any work that you specify. But we are nowhere near that yet.

Within the world of Large Language Models (LLMs), the key difference between a chat interface and an AI agent is simply the ability to use “tools”. These tools are just regular functions that the LLM can call.

Now you are probably wondering how a text chatbot can call functions. After all, LLMs only take in text and output text. They cannot run software or call functions themselves. At the time of writing, tool calling is handled by external software that watches the LLM’s output for certain triggers. That software calls the function named in the trigger and passes the function’s result back to the LLM. In software engineering lingo, this external software is a wrapper around the LLM.
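To make the “trigger” idea concrete, here is a minimal sketch of such a wrapper in plain Python. It assumes a made-up trigger format: a line starting with `CALL` followed by JSON. Real APIs, Gemini included, typically return tool calls as structured data rather than as a free-text marker, but the principle is the same. The wrapper, not the LLM, looks up and runs the function.

```python
import json
import re


def get_weather(city: str) -> str:
    """Get weather for a given city."""
    return f"It's always sunny in {city}!"


# Registry of functions the wrapper is allowed to call, keyed by name
TOOLS = {"get_weather": get_weather}

# Hypothetical trigger format the LLM would be instructed to emit, e.g.
# CALL {"name": "get_weather", "args": {"city": "San Francisco"}}
TRIGGER = re.compile(r"^CALL (\{.*\})$", re.MULTILINE)


def run_tools(llm_text: str) -> list[str]:
    """Scan LLM output for CALL triggers, run each named tool, and return the results."""
    results = []
    for match in TRIGGER.finditer(llm_text):
        call = json.loads(match.group(1))
        fn = TOOLS[call["name"]]
        results.append(fn(**call["args"]))
    return results
```

Given the text `'Let me check.\nCALL {"name": "get_weather", "args": {"city": "San Francisco"}}'`, `run_tools` returns `["It's always sunny in San Francisco!"]`. In a full agent, that result would then be sent back to the LLM.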

It is important to note that the function call happens outside the LLM, in that external software. For our very simple AI agent, we will use the popular LangChain package. Fun fact: the word “chain” in LangChain refers to a chain of function calls, where the output of one function is the input of the next.
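Here is a minimal plain-Python sketch of the “chain” idea, where each function’s output feeds the next. It is not LangChain’s API; LangChain composes components through its own Runnable interface (for example `prompt | llm | parser`). The step functions `format_prompt`, `fake_llm`, and `parse_output` are hypothetical stand-ins.

```python
from functools import reduce


def chain(*steps):
    """Compose steps left to right, so chain(f, g)(x) == g(f(x))."""
    def run(value):
        # Feed each step's output into the next step
        return reduce(lambda acc, step: step(acc), steps, value)
    return run


# Hypothetical stand-ins for prompt formatting, an LLM call, and output parsing
def format_prompt(topic: str) -> str:
    return f"Describe the {topic} in one word."


def fake_llm(prompt: str) -> str:
    return "  Sunny  "  # pretend model output with stray whitespace


def parse_output(text: str) -> str:
    return text.strip().lower()


pipeline = chain(format_prompt, fake_llm, parse_output)
print(pipeline("weather"))  # sunny
```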

Tool Calling with LangChain

LangChain has a great guide for working with Google Gemini and Python. First, we set up Gemini in LangChain and test if it runs correctly.

```python
# pip install langchain-google-genai

from langchain_google_genai import ChatGoogleGenerativeAI

llm = ChatGoogleGenerativeAI(
    model='gemini-2.5-flash',
    temperature=0.1,  # low temperature for more deterministic output
    google_api_key='YOUR_API_KEY',
)

messages = [
    ('system', 'Translate the user sentence to French.'),
    ('human', 'I love programming.'),
]

# invoke() returns an AIMessage; print its text to check that the call worked
ai_msg = llm.invoke(messages)
print(ai_msg.content)
```

Next, we try running this template from LangChain’s quickstart guide, with some slight modifications.

1from langchain.agents import create_agent

2

3def get_weather(city: str) -> str:

4 """Get weather for a given city."""

5 return f"It's always sunny in {city}!"

6

7agent = create_agent(

8 model=llm,

9 tools=[get_weather],

10 system_prompt="You are a helpful assistant",

11)

12

13# Run the agent

14output = agent.invoke(

15 {"messages": [{"role": "user", "content": "what is the weather in sf"}]}

16)

17

18print('user prompt : ', output['messages'][0].content)

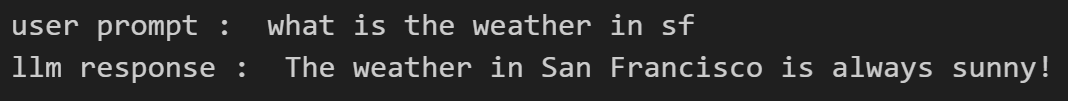

19print('llm response : ', output['messages'][-1].content)As we can see from the output below, the user asked the LLM agent for the weather in sf. The LLM agent was able to understand this enough to call the get_weather function with San Francisco as input.
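The model never sees the body of `get_weather`. Tool-calling frameworks typically send it each tool’s name, docstring, and parameter types as a schema, which is why the docstring and the `city: str` type hint matter. The sketch below approximates such a schema using only the standard `inspect` module. LangChain’s real conversion is more thorough, and the exact schema it sends to Gemini may differ from this simplified form.

```python
import inspect


def get_weather(city: str) -> str:
    """Get weather for a given city."""
    return f"It's always sunny in {city}!"


# Rough mapping from Python annotations to JSON-schema type names
PY_TO_JSON = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(fn) -> dict:
    """Build a simplified, JSON-schema-style description of fn from its signature and docstring."""
    sig = inspect.signature(fn)
    properties = {
        name: {"type": PY_TO_JSON.get(param.annotation, "string")}
        for name, param in sig.parameters.items()
    }
    # Parameters without a default value are required
    required = [
        name for name, param in sig.parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }
```

A vague docstring or a missing type hint gives the model less to go on when deciding which tool to call and with what arguments.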

Note that the function’s output is passed through the LLM again to generate the final response. As a result, the agent may paraphrase the function’s output rather than repeat it word for word.
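The round trip can be sketched as a loop over a growing message list. This also explains why the script above reads `output['messages'][0]` for the prompt and `output['messages'][-1]` for the answer. This is a minimal sketch under simplifying assumptions: `fake_llm` is a hypothetical stand-in for the model, and the plain-dict messages are not LangChain’s message classes.

```python
def get_weather(city: str) -> str:
    """Get weather for a given city."""
    return f"It's always sunny in {city}!"


TOOLS = {"get_weather": get_weather}


def fake_llm(messages):
    """Hypothetical stand-in for the LLM: request a tool first, then answer from its result."""
    last = messages[-1]
    if last["role"] == "user":
        return {"role": "assistant",
                "tool_call": {"name": "get_weather", "args": {"city": "San Francisco"}}}
    return {"role": "assistant", "content": f"Good news: {last['content']}"}


def run_agent(user_prompt, llm=fake_llm, max_steps=5):
    """Alternate between the LLM and tools until the LLM replies without a tool call."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = llm(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return messages  # no tool requested: this reply is the final answer
        result = TOOLS[call["name"]](**call["args"])
        # Feed the tool's output back so the LLM can phrase the final response
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")
```

For the prompt "what is the weather in sf", the returned list holds a user message, an assistant tool request, a tool result, and a final assistant reply. Like the real agent, the final reply rewords the tool’s output rather than repeating it verbatim.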